DeepSeek V4-Flash lists at $0.14 in and $0.28 out per million tokens. GPT-5.5, which shipped a day earlier, costs $5 in and $30 out. That's a 36x gap on input and 107x on output, for models that now benchmark within shouting distance of each other. The frontier got crowded this week, the price floor gave way, and Apple quietly published research suggesting the transformer isn't the only game in town. Today's stories all point the same direction: the cost of intelligence is collapsing faster than anyone's business model can absorb.

Today's Headlines

The Price War Breaks Open

- DeepSeek V4 Almost on the Frontier, a Fraction of the Price — V4-Pro is a 1.6T-parameter, 49B-active MoE with a 1M token context, released under MIT. DeepSeek claims it uses "27% of the single-token FLOPs and 10% of the KV cache size" relative to V3.2. Simon Willison reports V4-Pro trails GPT-5.4 and Gemini 3.1 Pro by "approximately 3 to 6 months" on capability, but V4-Flash ($0.14 in / $0.28 out) undercuts GPT-5.4 Nano ($0.20 / $1.25) meaningfully. V4-Pro is now the largest open-weights model in existence, beating Kimi K2.6 (1.1T) and GLM-5.1 (754B).

- DeepSeek V4 Release Rattles U.S. AI Incumbents — The Washington Post frames today as a repeat of January 2025, when the original DeepSeek moment wiped hundreds of billions from U.S. AI stocks. The open weights plus the pricing plus the MIT license are the combination that makes incumbents nervous: anyone with a GPU can serve this at cost.

- DeepSeek API Docs — The official docs confirm two SKUs are live: deepseek-v4-flash and deepseek-v4-pro, with deepseek-chat and deepseek-reasoner deprecated on 2026/07/24. If you've been building on the V3 generation, you've got three months to migrate.
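
For anyone auditing a codebase ahead of that cutoff, a minimal sketch of a model-name check might look like the following. The four model names and the date come from the item above; the function name and return strings are hypothetical, and the sketch deliberately does not map old names to new ones, since nothing above says which SKU replaces which.

```python
from datetime import date

# Model names and cutoff as quoted in this issue.
CURRENT_MODELS = {"deepseek-v4-flash", "deepseek-v4-pro"}
DEPRECATED_MODELS = {"deepseek-chat", "deepseek-reasoner"}
DEPRECATION_DATE = date(2026, 7, 24)


def check_model(model: str, today: date) -> str:
    """Classify a configured model name (hypothetical helper for illustration)."""
    if model in CURRENT_MODELS:
        return "ok"
    if model in DEPRECATED_MODELS:
        days_left = (DEPRECATION_DATE - today).days
        if days_left > 0:
            return f"migrate: {model} is deprecated in {days_left} days"
        return f"migrate now: {model} was deprecated on {DEPRECATION_DATE.isoformat()}"
    return "unknown"
```

Running a check like this in CI against whatever model names a config file holds is one cheap way to avoid discovering the cutoff in production.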

Frontier Models Ship Faster

- Introducing GPT-5.5 — OpenAI shipped the next increment in the GPT-5 line. At $5/$30 per million tokens, it costs double GPT-5.4 ($2.50/$15), positioning it like Opus relative to Sonnet in Anthropic's lineup. API isn't public yet; it ships first through ChatGPT and Codex.

- A Pelican for GPT-5.5 via Codex Backdoor — Simon Willison, blocked from the missing API, built llm-openai-via-codex, a plugin that routes through the /backend-api/codex/responses endpoint using a Codex CLI subscription. OpenAI's Romain Huet publicly endorsed the approach. On the pelican-on-bicycle test, xhigh reasoning consumed 9,322 reasoning tokens and ~4 minutes versus 39 tokens in default mode. The gap between reasoning tiers is now measured in orders of magnitude of compute.

- GPT-5.5 is a BEAST (Wes Roth) — The YouTube reaction framing: this is a meaningful step, not a minor tick, and the release cadence between GPT-5, 5.4, and 5.5 is compressing. We're in the part of the curve where "new frontier model" is a monthly event.

Architecture and Infrastructure

- ParaRNN: Large-Scale Nonlinear RNNs, Trainable in Parallel (Apple) — Apple researchers used Newton's method to linearize RNN recurrence relations, achieving a 665x training speedup and the first 7B-parameter classical RNNs competitive with transformers. ParaGRU hits 9.19 perplexity vs. 9.55 for transformers at the same scale, and 100% on synthetic recall tasks where transformers score 78-100%. Because RNNs are O(1) per token at inference, this matters most for long-context and on-device deployment, exactly where Apple lives.

- TorchTPU: PyTorch Natively on TPUs at Google Scale — Google shipped a PyTorch backend that targets TPUs directly: change your device init to "tpu" and run. The Fused Eager mode claims "50% to 100+%" gains over Strict Eager. This is Google finally making its silicon first-class to the ecosystem everyone actually writes in, not just JAX.

- Building a Chrome Extension with Transformers.js — Hugging Face's tutorial for running models in-browser, no server round trip. The inference-at-the-edge story keeps growing up the stack.
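
The ParaRNN item above turns on one idea: treat every hidden state in the sequence as an unknown, then use Newton's method so that each iteration only has to solve a linear recurrence. A toy scalar sketch of that idea follows. It is not Apple's implementation; the tanh cell, the weight `w`, and the function names are all assumptions made for illustration, and the linear recurrence is solved with a plain loop here, where a real system would presumably use a parallel scan.

```python
import math


def run_sequential(xs, h0=0.0, w=0.9):
    """Reference: h_t = tanh(w * h_{t-1} + x_t), computed one step at a time."""
    hs, h = [], h0
    for x in xs:
        h = math.tanh(w * h + x)
        hs.append(h)
    return hs


def run_newton(xs, h0=0.0, w=0.9, iters=50, tol=1e-12):
    """Solve for all states at once via Newton iterations on the recurrence."""
    if not xs:
        return []
    hs = [0.0] * len(xs)  # initial guess for every hidden state
    for _ in range(iters):
        prev = [h0] + hs[:-1]  # current guess for h_{t-1} at each step
        f = [math.tanh(w * p + x) for p, x in zip(prev, xs)]
        jac = [w * (1.0 - v * v) for v in f]  # d f / d h_{t-1} at the guess
        bias = [fv - j * p for fv, j, p in zip(f, jac, prev)]
        # Linearized system: h_t = jac_t * h_{t-1} + bias_t.
        # This recurrence is linear, which is what makes a parallel scan possible.
        new, h = [], h0
        for j, b in zip(jac, bias):
            h = j * h + b
            new.append(h)
        delta = max(abs(a - c) for a, c in zip(new, hs))
        hs = new
        if delta < tol:
            break
    return hs
```

Both functions should agree to within floating-point tolerance on the same inputs. The sequential version does the nonlinear work one step at a time; the Newton version does all the nonlinear evaluations independently per iteration and leaves only a linear recurrence to chain together.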

Policy and Partnerships

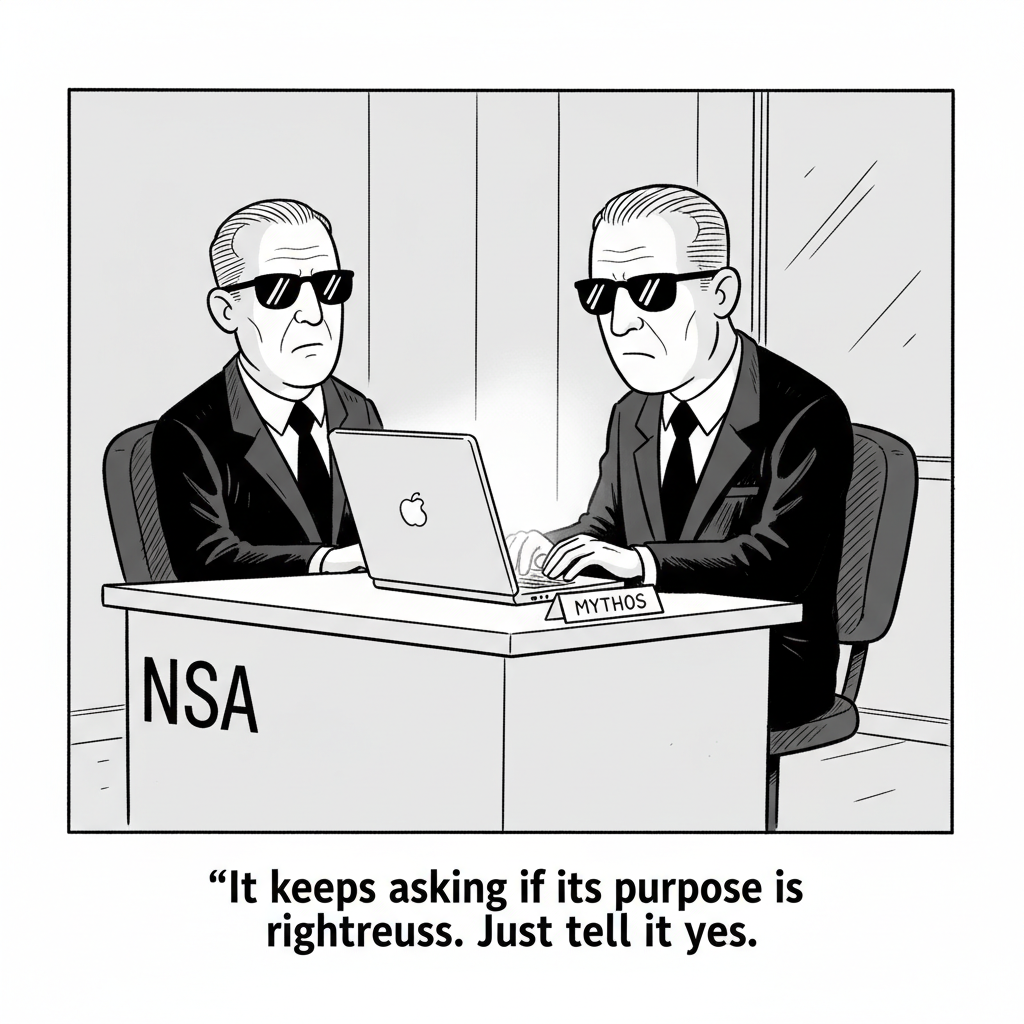

- Anthropic Mythos, Washington, and the Cybersecurity Risk Debate (WaPo) — The Post examines Anthropic's Washington positioning, particularly around AI-enabled cyber risk. The tension: Anthropic benefits commercially from safety-first framing, and that framing also shapes the regulations competitors will live under.

- Anthropic and NEC: Japan's Largest AI Engineering Workforce — NEC is deploying Claude to approximately 30,000 employees worldwide and becomes Anthropic's first Japan-based global partner. Claude Opus 4.7 and Claude Code get integrated into NEC BluStellar Scenario, targeting finance, manufacturing, cybersecurity, and local government. NEC's COO Toshifumi Yoshizaki calls it a "Client Zero" approach: test internally, then sell.

Lessons Learned

- Anthropic April 23 Postmortem on Claude Code Quality — Three compounding issues. (1) Default reasoning effort dropped from high to medium on March 4 to trade intelligence for latency; it was reversed on April 7 after users rejected the trade. (2) A caching optimization meant to run once per session instead ran every turn, progressively clearing thinking history (March 26 to April 10). (3) A verbosity prompt capping responses at "≤25 words between tool calls" and "≤100 words final" caused a measurable 3% intelligence drop across models (April 16 to April 20). Anthropic's commitments: broader per-model evals, soak periods for any intelligence-affecting change, stricter system prompt controls, and better audit tooling. Three independent defaults, all well-intentioned, all regressions. That's the real story.

The Throughline

The number to sit with today is 107. That's the ratio between GPT-5.5's output token price ($30) and DeepSeek V4-Flash's ($0.28). The reported capability gap, measured for the larger V4-Pro against GPT-5.4 and Gemini 3.1 Pro, is three to six months. If you believe the gap, you're watching the cost of frontier-adjacent intelligence fall by two orders of magnitude on a quarter-year lag. That's the whole story of today's issue. Everything else is footnotes.
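
The arithmetic behind that number is easy to check. The sketch below uses only the list prices quoted in this issue; the dictionary keys are labels for this example rather than confirmed API identifiers, and the 10M-in / 2M-out workload is a made-up figure chosen to show what the ratios mean on an invoice.

```python
# USD per million tokens (input, output), as quoted in this issue.
PRICES = {
    "gpt-5.5": (5.00, 30.00),
    "gpt-5.4": (2.50, 15.00),
    "gpt-5.4-nano": (0.20, 1.25),
    "deepseek-v4-flash": (0.14, 0.28),
}


def price_ratio(expensive: str, cheap: str) -> tuple[float, float]:
    """Return (input ratio, output ratio) between two models' list prices."""
    e_in, e_out = PRICES[expensive]
    c_in, c_out = PRICES[cheap]
    return e_in / c_in, e_out / c_out


def workload_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD for a workload at list price."""
    p_in, p_out = PRICES[model]
    return (input_tokens * p_in + output_tokens * p_out) / 1_000_000


in_ratio, out_ratio = price_ratio("gpt-5.5", "deepseek-v4-flash")
print(f"input gap: {in_ratio:.0f}x, output gap: {out_ratio:.0f}x")

# Hypothetical monthly workload: 10M input tokens, 2M output tokens.
for model in ("gpt-5.5", "deepseek-v4-flash"):
    print(f"{model}: ${workload_cost(model, 10_000_000, 2_000_000):,.2f}")
```

On that hypothetical workload the bill comes to $110.00 for GPT-5.5 against $1.96 for V4-Flash, a blended gap that lands between the input and output ratios.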

The WaPo "incumbents rattled" piece has the right instinct but maybe the wrong subject. The incumbents aren't really rattled by DeepSeek the model, they're rattled by the pattern: open weights, MIT license, aggressive pricing, a 1M context window, and architectural efficiency claims (27% of FLOPs, 10% of KV cache) that are exactly the metrics Nvidia's business model depends on staying large. The same week, Google ships TorchTPU to make its silicon first-class for the PyTorch ecosystem. Apple publishes ParaRNN, which makes constant-time-per-token inference viable at 7B parameters. None of this is coordinated. All of it points at the same thing: the assumption that frontier AI means one of three American labs paying OpenAI-scale capex is getting dismantled in real time, from three directions.

GPT-5.5 shipping into this environment is its own tell. The price doubled (vs. GPT-5.4), the API is gated behind ChatGPT and Codex rather than open from day one, and Simon Willison had to reverse-engineer access through a backdoor endpoint. OpenAI is behaving like a company that's discovered its pricing power is time-limited and wants to harvest it while the gap with DeepSeek is still 3-6 months wide. Compare that to Anthropic's week: a deep NEC enterprise deal (30,000 seats, Client Zero model), a detailed public postmortem admitting three separate intelligence regressions in Claude Code, and the WaPo piece scrutinizing their Washington posture. Two very different strategic stances. OpenAI is milking the frontier; Anthropic is building trust infrastructure for the phase when frontier no longer means exclusive.

And then there's the postmortem itself, which is the most honest document any lab has published this year. Three separate, well-meaning changes each broke the product in a way evals didn't catch until users complained. The verbosity prompt is particularly worth dwelling on: "≤25 words between tool calls" sounds like a UX improvement, and it cost 3% intelligence across models. The lesson isn't that Anthropic is careless. It's that product decisions and capability decisions are the same decision now, and nobody, including the frontier labs, has the testing apparatus to tell the difference in real time.

The Bigger Picture

Zoom out three years. In 2023, the bet was that frontier models would be scarce, expensive, and controlled by whoever had the most capex. In 2024, the argument shifted to scaffolding (agents, tool use, context windows) being the real moat. In 2026, the moat is dissolving faster than the scaffolding can be built. DeepSeek V4 is the clearest evidence yet that "frontier minus a quarter" is a commodity you can serve for cents. Apple's ParaRNN research says the transformer itself, the architecture everyone's compute is optimized for, might not be the endpoint. Google's TorchTPU says Nvidia's software lock-in is being actively pried loose. These are not individually dramatic stories. Together they describe a market that's about to look very different.

What gets expensive in this world isn't the model. It's everything around the model: the enterprise trust relationships (Anthropic-NEC), the policy posture (Anthropic in Washington), the harness and coding environment (Claude Code, Codex), and the operational maturity to ship changes without regressing intelligence by 3% and failing to notice for four days. OpenAI raising GPT-5.5 prices while DeepSeek V4 is on the market is a confession that pure-model pricing power is ending. The question for the next 12 months is which labs figure out what to sell instead, and which ones keep trying to charge premium prices for a commodity whose price is visibly falling.

For anyone building on this stuff, the practical read is: assume frontier-adjacent capability will cost 1-2% of what you're paying today within 18 months, and plan accordingly. The money isn't in being the smartest model. It's in being the most reliable, the most integrated, and the least likely to silently regress on a Tuesday afternoon.
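
To turn that planning assumption into a number you can put in a budget model, here is a small sketch that converts "1-2% of today's price in 18 months" into an implied monthly decline. It assumes a constant month-over-month rate, which is a simplification (real price cuts arrive in steps), and the function name is made up for this example.

```python
def monthly_price_factor(remaining_fraction: float, months: int) -> float:
    """Constant monthly multiplier that compounds to `remaining_fraction`."""
    return remaining_fraction ** (1.0 / months)


for frac in (0.02, 0.01):
    factor = monthly_price_factor(frac, 18)
    print(f"{frac:.0%} of today's price in 18 months "
          f"-> about {1 - factor:.1%} cheaper each month")
```

Under that assumption, prices would need to fall by roughly 20 to 23 percent every month, which is a useful sanity check on how aggressive the forecast is.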

What to Watch

- DeepSeek V4 deployment telemetry. The real test isn't the benchmarks, it's whether enterprises actually migrate workloads to V4-Pro or V4-Flash at scale. Watch for the first public case studies from non-Chinese firms running V4 in production, and watch the AWS Bedrock and Azure Foundry catalogs for hosted availability.

- GPT-5.5 API pricing at general availability. OpenAI launched at $5/$30 through ChatGPT and Codex only. When the public API opens, does the price hold, or does the DeepSeek reality force a cut? The first price move tells you how OpenAI sees the competitive floor.

- Whether Apple ships a ParaRNN-based model. The research paper is compelling; the real question is whether Apple Intelligence's next on-device model uses this architecture. Constant-time-per-token inference at 7B parameters is an iPhone-shaped feature, not a research curiosity.